Build a Semantic Search Engine from Scratch with Python

What Is Semantic Search and How Does It Work?

Semantic search finds documents by meaning rather than keyword overlap. Text is converted into dense vector embeddings using a sentence-transformer model, then stored in a vector database. When a query arrives, it is embedded using the same model and the system returns documents whose vectors are closest in meaning - measured by cosine similarity. Queries and results do not need to share any words.
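To make "closest in meaning" concrete, here is a minimal sketch of cosine similarity in plain Python. The three-dimensional vectors are made-up stand-ins for real embeddings (all-MiniLM-L6-v2, the model used later, produces 384-dimensional vectors), and the `cosine_similarity` function is written for this illustration rather than taken from any library in this project.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors: dot(a, b) / (|a| * |b|)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # convention chosen for this sketch: a zero vector matches nothing
    return dot / (norm_a * norm_b)


# Toy "embeddings" (hypothetical values, not produced by a real model).
query = [0.9, 0.1, 0.0]
doc_close = [0.8, 0.2, 0.1]
doc_far = [0.0, 0.2, 0.9]

sim_close = cosine_similarity(query, doc_close)
sim_far = cosine_similarity(query, doc_far)
print(f"close: {sim_close:.3f}, far: {sim_far:.3f}")
```

Vectors that point in nearly the same direction score close to 1, while vectors that point in unrelated directions score close to 0, regardless of their length.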

Keyword search has a fundamental flaw: it matches words, not meaning. Your users do not search for keywords - they describe what they need. A user who types "how to cancel my account" is looking for the same article as one who types "steps to close my subscription." Keyword search misses one of those. Semantic search matches both.
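The gap is easy to see with a naive keyword matcher. The sketch below is an illustration only: the stop-word list and the `keywords` and `keyword_overlap` helpers are assumptions made for this example, not part of the project code.

```python
STOP_WORDS = {"how", "to", "my", "the", "a", "of", "i", "do"}  # tiny illustrative list


def keywords(text: str) -> set[str]:
    """Lowercase, split on whitespace, and drop stop words."""
    return {word for word in text.lower().split() if word not in STOP_WORDS}


def keyword_overlap(a: str, b: str) -> set[str]:
    """Content words that two texts have in common."""
    return keywords(a) & keywords(b)


query_1 = "how to cancel my account"
query_2 = "steps to close my subscription"

shared = keyword_overlap(query_1, query_2)
print(shared)  # set()
```

The two phrasings share no content words, so an index built on term overlap has nothing to connect them with, even though the intent is the same.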

In this project you will build a complete semantic search engine from scratch. By the end you will have a working system that can ingest documents from text files, chunk and embed them, store embeddings in ChromaDB, and expose a clean Python search interface that returns results ranked by meaning - not by keyword overlap.

This is a standalone project. If you want to understand the underlying theory before diving in, read What is a Vector Database? and ChromaDB Tutorial first.

What You Will Build

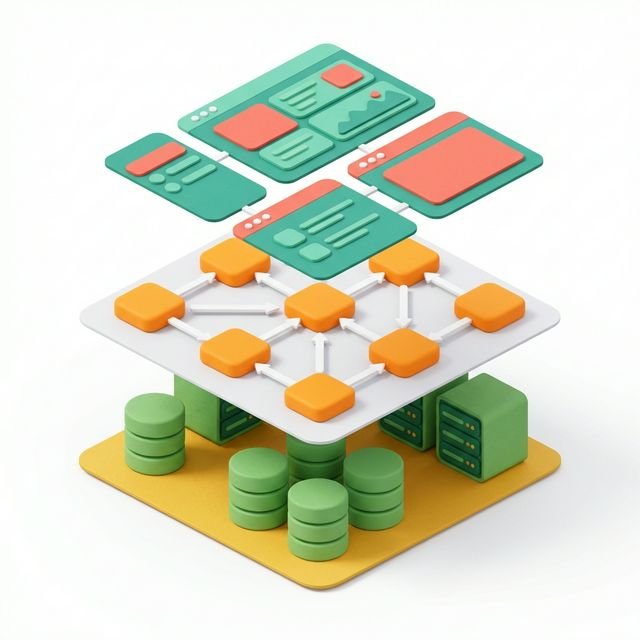

A five-component semantic search system:

- Document loader: reads text files or plain strings into a standard format

- Text chunker: splits long documents into overlapping chunks suitable for embedding

- Embedding pipeline: converts chunks to vector embeddings using a local model

- Vector store: persists embeddings in ChromaDB with metadata

- Search interface: accepts natural-language queries and returns ranked results
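Before building the real thing, it may help to see how the five components hand data to each other. The toy pipeline below is a sketch under heavy assumptions: every function name is hypothetical, the "embedding" is just a word count (so it is not semantic at all), and a Python list stands in for the vector store. Only the shape of the data flow carries over to the real system.

```python
import math
from collections import Counter


def load(texts: list[str]) -> list[dict]:
    """Document loader: wrap raw strings in a standard record."""
    return [{"source": f"doc-{i}", "content": t.strip()} for i, t in enumerate(texts) if t.strip()]


def chunk(doc: dict, size: int = 40, overlap: int = 10) -> list[dict]:
    """Text chunker: overlapping fixed-size character windows."""
    step = size - overlap
    content = doc["content"]
    return [
        {"source": doc["source"], "text": content[start:start + size]}
        for start in range(0, len(content), step)
    ]


def embed(text: str) -> Counter:
    """Embedding stand-in: word counts. A real system calls an embedding model here."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[w] * b[w] for w in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def search(store: list[dict], query: str, n_results: int = 1) -> list[dict]:
    """Search interface: embed the query and rank stored chunks by similarity."""
    q = embed(query)
    ranked = sorted(store, key=lambda rec: cosine(q, rec["vector"]), reverse=True)
    return ranked[:n_results]


docs = load(["reset your password from the login page", "cancel your subscription in settings"])
# Vector store stand-in: a list of chunk records, each carrying its vector.
store = [{**c, "vector": embed(c["text"])} for d in docs for c in chunk(d)]
top = search(store, "password reset")
print(top[0]["source"])  # doc-0
```

In the real project, `embed` is replaced by a sentence-transformer model and the list by a ChromaDB collection, which is what lets queries match documents that share no words.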

Prerequisites

```bash
pip install chromadb sentence-transformers
```

Python 3.10 or later. No API keys required - everything runs locally.

Step 1: Document Loader

Start with a clean data model. Every document in the system has a source, content, and metadata.

```python
# semantic_search/loader.py
from dataclasses import dataclass, field
from pathlib import Path


@dataclass
class Document:
    content: str
    source: str
    metadata: dict = field(default_factory=dict)


class DocumentLoader:
    """Load documents from text strings or .txt files."""

    @staticmethod
    def from_texts(texts: list[str], source: str = "inline") -> list[Document]:
        return [
            Document(content=text.strip(), source=source, metadata={"index": i})
            for i, text in enumerate(texts)
            if text.strip()
        ]

    @staticmethod
    def from_file(path: str | Path) -> list[Document]:
        path = Path(path)
        if not path.exists():
            raise FileNotFoundError(f"File not found: {path}")
        content = path.read_text(encoding="utf-8")
        return [Document(content=content, source=str(path))]

    @staticmethod
    def from_directory(directory: str | Path, extension: str = ".txt") -> list[Document]:
        directory = Path(directory)
        documents = []
        for file_path in sorted(directory.glob(f"**/*{extension}")):
            content = file_path.read_text(encoding="utf-8").strip()
            if content:
                documents.append(Document(
                    content=content,
                    source=str(file_path),
                    metadata={"filename": file_path.name, "stem": file_path.stem}
                ))
        return documents
```

Step 2: Text Chunker

Long documents can exceed the embedding model's input limit (typically 256-512 tokens for sentence-transformers models), and text beyond that limit is truncated before it is embedded. Chunking splits documents into smaller, overlapping segments. The overlap means a sentence that straddles a chunk boundary still appears whole in at least one chunk, provided the sentence is shorter than the overlap.
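As a rough illustration of the sliding window, the sketch below computes only the character offsets of each chunk. `chunk_spans` is a hypothetical helper written for this example; it mirrors the fixed-size, fixed-step approach described above and is not a library API.

```python
def chunk_spans(text_length: int, chunk_size: int, overlap: int) -> list[tuple[int, int]]:
    """Return (start, end) character offsets for overlapping fixed-size chunks."""
    if overlap >= chunk_size:
        raise ValueError("overlap must be smaller than chunk_size")
    step = chunk_size - overlap  # how far the window advances each time
    spans = []
    start = 0
    while start < text_length:
        end = min(start + chunk_size, text_length)
        spans.append((start, end))
        start += step
    return spans


spans = chunk_spans(text_length=1200, chunk_size=500, overlap=100)
print(spans)  # [(0, 500), (400, 900), (800, 1200)]
```

Each span starts 400 characters after the previous one, so consecutive chunks share 100 characters.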

```python
# semantic_search/chunker.py
from dataclasses import dataclass, field

from .loader import Document


@dataclass
class Chunk:
    text: str
    source: str
    chunk_index: int
    total_chunks: int
    metadata: dict = field(default_factory=dict)


class TextChunker:
    """
    Split documents into overlapping fixed-size character chunks.

    chunk_size: target chunk size in characters (~500 chars ≈ 100-150 tokens)
    chunk_overlap: overlap between consecutive chunks in characters
    """

    def __init__(self, chunk_size: int = 500, chunk_overlap: int = 100):
        if chunk_overlap >= chunk_size:
            raise ValueError("chunk_overlap must be smaller than chunk_size")
        self.chunk_size = chunk_size
        self.chunk_overlap = chunk_overlap

    def chunk(self, document: Document) -> list[Chunk]:
        text = document.content
        chunks = []
        start = 0
        # Each new chunk starts this many characters after the previous one
        step = self.chunk_size - self.chunk_overlap

        while start < len(text):
            end = start + self.chunk_size
            chunk_text = text[start:end].strip()
            if chunk_text:
                chunks.append(Chunk(
                    text=chunk_text,
                    source=document.source,
                    chunk_index=len(chunks),
                    total_chunks=0,  # filled in below
                    metadata={**document.metadata}
                ))
            start += step

        # Set total_chunks now that we know the count
        for chunk in chunks:
            chunk.total_chunks = len(chunks)
        return chunks

    def chunk_all(self, documents: list[Document]) -> list[Chunk]:
        all_chunks = []
        for doc in documents:
            all_chunks.extend(self.chunk(doc))
        return all_chunks
```

Choosing Chunk Size

For support articles or documentation (dense, factual content), 400-600 characters works well. For narrative text or long-form articles, try 800-1000 characters. An overlap of 100-150 characters keeps context at chunk boundaries from being lost. Smaller chunks improve retrieval precision; larger chunks give each result more surrounding context, which matters if results are later passed to an LLM.
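To get a feel for the trade-off, the following sketch estimates how many chunks a document produces at different settings. It assumes the fixed-step sliding window used in this project, where a new chunk starts every `chunk_size - overlap` characters; `estimate_chunk_count` and the 6000-character document length are illustrative choices, not part of the project.

```python
import math


def estimate_chunk_count(text_length: int, chunk_size: int, overlap: int) -> int:
    """Number of windows when a new chunk starts every (chunk_size - overlap) characters."""
    if overlap >= chunk_size:
        raise ValueError("overlap must be smaller than chunk_size")
    if text_length <= 0:
        return 0
    step = chunk_size - overlap
    return math.ceil(text_length / step)


doc_length = 6000  # characters; a hypothetical medium-length article
for chunk_size, overlap in [(400, 100), (600, 100), (1000, 150)]:
    count = estimate_chunk_count(doc_length, chunk_size, overlap)
    print(f"chunk_size={chunk_size:>4} overlap={overlap:>3} -> {count} chunks")
```

More chunks mean more vectors to store and search, but each one covers a narrower slice of the document.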

Step 3: Vector Store Wrapper

Wrap ChromaDB with a clean interface that knows nothing about the chunker or loader:

```python
# semantic_search/store.py
import hashlib

import chromadb
from chromadb.utils import embedding_functions

from .chunker import Chunk


class VectorStore:
    """
    ChromaDB-backed vector store for document chunks.
    Handles ID generation, upserts, and structured queries.
    """

    def __init__(
        self,
        persist_path: str = "./search_index",
        collection_name: str = "semantic_search",
        model_name: str = "all-MiniLM-L6-v2",
    ):
        self.client = chromadb.PersistentClient(path=persist_path)
        self.ef = embedding_functions.SentenceTransformerEmbeddingFunction(
            model_name=model_name
        )
        self.collection = self.client.get_or_create_collection(
            name=collection_name,
            embedding_function=self.ef,
            metadata={"hnsw:space": "cosine"},
        )

    @staticmethod
    def _make_chunk_id(chunk: Chunk) -> str:
        """Stable ID: hash of (source + document index + chunk_index)."""
        # from_texts() gives every text in a batch the same source, so the
        # per-document "index" metadata is included to keep IDs from colliding.
        doc_index = chunk.metadata.get("index", 0)
        key = f"{chunk.source}::{doc_index}::{chunk.chunk_index}"
        return hashlib.sha256(key.encode()).hexdigest()[:20]

    def add_chunks(self, chunks: list[Chunk]) -> int:
        """Add or update chunks in the vector store. Returns number added."""
        if not chunks:
            return 0

        ids = [self._make_chunk_id(c) for c in chunks]
        texts = [c.text for c in chunks]
        metadatas = [
            {
                "source": c.source,
                "chunk_index": c.chunk_index,
                "total_chunks": c.total_chunks,
                **c.metadata,
            }
            for c in chunks
        ]

        self.collection.upsert(ids=ids, documents=texts, metadatas=metadatas)
        return len(chunks)

    def count(self) -> int:
        return self.collection.count()

    def search(
        self,
        query: str,
        n_results: int = 5,
        filters: dict | None = None,
        min_similarity: float = 0.0,
    ) -> list[dict]:
        """
        Run semantic search.

        Returns results as dicts with keys: text, similarity, source,
        chunk_index, metadata.
        Optionally filter by minimum similarity threshold.
        """
        # Over-fetch so that enough results survive the similarity threshold
        actual_n = min(n_results * 2, self.collection.count())
        if actual_n == 0:
            return []

        kwargs = {
            "query_texts": [query],
            "n_results": actual_n,
            "include": ["documents", "distances", "metadatas"],
        }
        if filters:
            kwargs["where"] = filters

        raw = self.collection.query(**kwargs)

        results = []
        for text, dist, meta in zip(
            raw["documents"][0],
            raw["distances"][0],
            raw["metadatas"][0],
        ):
            # Cosine distance -> cosine similarity
            similarity = round(1 - dist, 4)
            if similarity >= min_similarity:
                results.append({
                    "text": text,
                    "similarity": similarity,
                    "source": meta.get("source", ""),
                    "chunk_index": meta.get("chunk_index", 0),
                    "metadata": meta,
                })

        # Sort by similarity and trim to requested n
        results.sort(key=lambda r: r["similarity"], reverse=True)
        return results[:n_results]

    def delete_source(self, source: str) -> None:
        """Remove all chunks from a specific source document."""
        self.collection.delete(where={"source": source})
```

Step 4: Search Engine - Putting It All Together

```python
# semantic_search/engine.py
from .loader import Document, DocumentLoader
from .chunker import TextChunker
from .store import VectorStore


class SemanticSearchEngine:
    """
    Complete semantic search engine: ingest -> chunk -> embed -> search.
    """

    def __init__(
        self,
        persist_path: str = "./search_index",
        collection_name: str = "semantic_search",
        chunk_size: int = 500,
        chunk_overlap: int = 100,
        model_name: str = "all-MiniLM-L6-v2",
    ):
        self.loader = DocumentLoader()
        self.chunker = TextChunker(chunk_size=chunk_size, chunk_overlap=chunk_overlap)
        self.store = VectorStore(
            persist_path=persist_path,
            collection_name=collection_name,
            model_name=model_name,
        )

    def ingest_texts(self, texts: list[str], source: str = "inline") -> dict:
        docs = DocumentLoader.from_texts(texts, source=source)
        return self._ingest(docs)

    def ingest_file(self, path: str) -> dict:
        docs = DocumentLoader.from_file(path)
        return self._ingest(docs)

    def ingest_directory(self, directory: str, extension: str = ".txt") -> dict:
        docs = DocumentLoader.from_directory(directory, extension=extension)
        return self._ingest(docs)

    def _ingest(self, documents: list[Document]) -> dict:
        if not documents:
            return {"documents": 0, "chunks": 0}
        chunks = self.chunker.chunk_all(documents)
        added = self.store.add_chunks(chunks)
        return {"documents": len(documents), "chunks": added}

    def search(
        self,
        query: str,
        n_results: int = 5,
        filters: dict | None = None,
        min_similarity: float = 0.3,
    ) -> list[dict]:
        return self.store.search(
            query=query,
            n_results=n_results,
            filters=filters,
            min_similarity=min_similarity,
        )

    def stats(self) -> dict:
        return {"total_chunks": self.store.count()}
```

Step 5: Run It - Full Working Demo

```python
# demo.py
from semantic_search.engine import SemanticSearchEngine

engine = SemanticSearchEngine(persist_path="./search_index")

# -- Ingest documents ----------------------------------------------
result = engine.ingest_texts(
    texts=[
        """Password Reset Guide
        If you are locked out of your account, go to the login page and click
        'Forgot Password'. Enter your email address and you will receive a reset
        link within 5 minutes. The link expires after 24 hours. If you do not
        receive the email, check your spam folder or contact support.""",

        """Device Boot Failure After Update
        Some users have reported that their device fails to boot following a
        firmware update. To resolve this: hold the power button for 10 seconds
        to force shutdown, then restart normally. If the device still fails to
        boot, enter recovery mode by holding Power + Volume Down for 15 seconds.""",

        """Subscription Cancellation Policy
        You may cancel your subscription at any time from the Account Settings
        page. Cancellation takes effect at the end of the current billing period.
        You will not be charged for the next period. Refunds are not available
        for partial billing periods.""",

        """Invoice and Billing History
        Your full billing history is available under Account > Billing > Invoices.
        Each invoice can be downloaded as a PDF. For team and enterprise accounts,
        invoices are automatically emailed to the billing contact on record.""",

        """Battery Life Optimisation
        If your battery drains faster than expected after a software update,
        try the following: disable background app refresh, reduce screen
        brightness, and toggle aeroplane mode briefly to reset radio connections.
        A calibration cycle - full discharge followed by a full charge - often
        resolves post-update battery drain.""",
    ],
    source="support_kb"
)
print(f"Ingested: {result}")

# -- Search --------------------------------------------------------
queries = [
    "my laptop won't start after the update",
    "how do I get my password back",
    "will I get charged if I stop subscribing",
    "battery stops working quickly",
]

for query in queries:
    print(f"\nQuery: '{query}'")
    results = engine.search(query, n_results=2, min_similarity=0.3)
    for r in results:
        # Collapse newlines and indentation so each preview fits on one line
        preview = " ".join(r["text"].split())[:90]
        print(f"  [{r['similarity']:.4f}] {preview}...")
```

Expected output (exact similarity scores will vary with model and library versions):

```text
Ingested: {'documents': 5, 'chunks': 5}

Query: 'my laptop won't start after the update'
  [0.8123] Device Boot Failure After Update Some users have reported that their device fails...
  [0.5891] Battery Life Optimisation If your battery drains faster than expected after a so...

Query: 'how do I get my password back'
  [0.8741] Password Reset Guide If you are locked out of your account, go to the login page...
  [0.4102] Subscription Cancellation Policy You may cancel your subscription at any time fr...

Query: 'will I get charged if I stop subscribing'
  [0.8934] Subscription Cancellation Policy You may cancel your subscription at any time fr...
  [0.4211] Invoice and Billing History Your full billing history is available under Account...

Query: 'battery stops working quickly'
  [0.8567] Battery Life Optimisation If your battery drains faster than expected after a so...
  [0.5213] Device Boot Failure After Update Some users have reported that their device fail...
```

Every result is found through meaning, not keywords. The query "will I get charged if I stop subscribing" never uses the words "cancel" or "subscription" and shares only incidental words ("will", "charged") with the matching document - it is matched on semantic similarity, not term overlap.
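The scores above come from converting distances into similarities and then filtering and ranking. As a sketch of that post-processing step, assuming cosine distance (so similarity = 1 - distance) and using made-up distances rather than real query results, with `rank_results` as an illustrative helper name:

```python
def rank_results(
    texts: list[str],
    distances: list[float],
    n_results: int = 2,
    min_similarity: float = 0.3,
) -> list[dict]:
    """Convert cosine distances to similarities, drop weak matches, keep the top n."""
    results = []
    for text, distance in zip(texts, distances):
        similarity = round(1 - distance, 4)  # cosine distance -> cosine similarity
        if similarity >= min_similarity:
            results.append({"text": text, "similarity": similarity})
    results.sort(key=lambda r: r["similarity"], reverse=True)
    return results[:n_results]


# Hypothetical distances for one query (illustrative values only).
texts = ["Subscription Cancellation Policy", "Invoice and Billing History", "Password Reset Guide"]
distances = [0.11, 0.58, 0.85]

ranked = rank_results(texts, distances)
for r in ranked:
    print(f"[{r['similarity']:.4f}] {r['text']}")
```

The third document has a similarity of about 0.15, which falls below the 0.3 threshold, so it is dropped before the top results are returned.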

Step 6: Chunking Long Documents

To see chunking in action, try a longer document:

```python
# Continues from demo.py: `engine` is the SemanticSearchEngine created there
long_article = """
The Python programming language was created by Guido van Rossum and first
released in 1991. Python's design philosophy emphasises code readability,
and its syntax allows programmers to express concepts in fewer lines of code
than would be possible in languages such as C++ or Java.

Python supports multiple programming paradigms, including structured,
object-oriented, and functional programming. It features a dynamic type
system and automatic memory management.

Python is widely used in web development, data science, artificial intelligence,
scientific computing, and automation. It is consistently ranked as one of the
most popular programming languages in the world.

The Python Package Index (PyPI) hosts thousands of third-party modules for
Python. Both the Python standard library and the community-contributed modules
allow for endless possibilities for developers.
"""

engine.ingest_texts([long_article], source="python_intro")

results = engine.search("who made Python and when", n_results=2)
for r in results:
    print(f"[{r['similarity']:.3f}] [{r['source']}] (chunk {r['chunk_index']}) {r['text'][:100]}...")
```

With the default settings (500-character chunks, 100-character overlap), the chunker splits this roughly 870-character document into three overlapping chunks, the last of which is a short tail. All of them are indexed. The query "who made Python and when" should rank the chunk containing the creation details first.

Step 7: Re-indexing and Document Updates

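The engine handles content updates cleanly because chunk IDs are deterministic: they are hashed from the source and chunk position rather than generated randomly. Re-ingesting under the same source overwrites existing chunks instead of duplicating them; publishing under a new source name, as below, leaves the old chunks in place until you delete them: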

```python
# Publish an updated version of a document under a new source name
engine.ingest_texts(
    texts=[
        """Password Reset Guide (Updated April 2026)
        To reset your password: visit login page, click 'Forgot Password',
        enter your registered email. Reset link valid for 48 hours (extended from 24).
        SMS verification required for accounts with 2FA enabled."""
    ],
    source="support_kb_v2"
)

# The old source still exists - delete it explicitly if needed.
# Caution: this removes every chunk whose source is "support_kb",
# i.e. all five demo articles, not only the old password guide.
engine.store.delete_source("support_kb")
```

In a production ingestion pipeline, give each document its own source identifier and call delete_source() before re-ingesting when the content changes, so that chunks left over from a longer previous version do not linger in the index.
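To see why deleting before re-ingesting matters, here is a small in-memory simulation. A plain dict stands in for the vector store, and `make_id`, `upsert_chunks`, and `delete_source` are hypothetical helpers written for this sketch; they imitate IDs hashed from source and chunk index but do not call ChromaDB.

```python
import hashlib

index: dict[str, dict] = {}  # chunk ID -> stored record (stand-in for a collection)


def make_id(source: str, chunk_index: int) -> str:
    """Deterministic ID: the same (source, chunk_index) always hashes to the same value."""
    return hashlib.sha256(f"{source}::{chunk_index}".encode()).hexdigest()[:20]


def upsert_chunks(source: str, chunks: list[str]) -> None:
    """Insert new chunks or overwrite existing ones that share an ID."""
    for i, text in enumerate(chunks):
        index[make_id(source, i)] = {"source": source, "text": text}


def delete_source(source: str) -> None:
    """Remove every chunk that came from the given source."""
    for chunk_id in [cid for cid, rec in index.items() if rec["source"] == source]:
        del index[chunk_id]


# Version 1 of a document produces three chunks.
upsert_chunks("guide.txt", ["intro v1", "steps v1", "appendix v1"])

# Version 2 is shorter. Upserting alone overwrites chunks 0 and 1
# but leaves the old chunk 2 behind as a stale entry.
upsert_chunks("guide.txt", ["intro v2", "steps v2"])
stale_count = len(index)  # 3: "appendix v1" is still present

# Deleting the source first gives a clean re-index.
delete_source("guide.txt")
upsert_chunks("guide.txt", ["intro v2", "steps v2"])
clean_count = len(index)  # 2

print(stale_count, clean_count)  # 3 2
```

Deterministic IDs prevent duplicates, but only an explicit delete removes chunks that no longer exist in the new version.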

Adding a Simple REST API with FastAPI

To expose your search engine as an HTTP endpoint:

```bash
pip install fastapi uvicorn
```

```python
# api.py
from fastapi import FastAPI, HTTPException
from pydantic import BaseModel

from semantic_search.engine import SemanticSearchEngine

app = FastAPI(title="Semantic Search API")
engine = SemanticSearchEngine(persist_path="./search_index")


class SearchRequest(BaseModel):
    query: str
    n_results: int = 5
    min_similarity: float = 0.3


class IngestRequest(BaseModel):
    texts: list[str]
    source: str = "api"


@app.post("/search")
def search(request: SearchRequest):
    if not request.query.strip():
        raise HTTPException(status_code=400, detail="Query cannot be empty")
    results = engine.search(
        request.query,
        n_results=request.n_results,
        min_similarity=request.min_similarity,
    )
    return {"query": request.query, "results": results}


@app.post("/ingest")
def ingest(request: IngestRequest):
    if not request.texts:
        raise HTTPException(status_code=400, detail="texts list cannot be empty")
    result = engine.ingest_texts(request.texts, source=request.source)
    return result


@app.get("/stats")
def stats():
    return engine.stats()
```

```bash
uvicorn api:app --reload
```

Test it:

```bash
curl -X POST http://localhost:8000/search \
  -H "Content-Type: application/json" \
  -d '{"query": "reset my password", "n_results": 3}'
```

Project File Structure

```text
semantic_search/
+-- __init__.py
+-- loader.py      ← Document loading
+-- chunker.py     ← Text splitting
+-- store.py       ← ChromaDB wrapper
+-- engine.py      ← Main orchestrator
demo.py            ← Console demo
api.py             ← FastAPI REST endpoint
search_index/      ← ChromaDB persistent storage (auto-created)
```

Key Takeaways

- Semantic search finds documents by meaning, not keywords - queries and documents do not need to share words

- Chunking is essential for long documents - split with overlap to avoid losing context at boundaries

- Deterministic IDs (hashed source + index) make re-ingesting the same source idempotent - existing chunks are overwritten rather than duplicated

- Setting a min_similarity threshold (0.3-0.4) filters out irrelevant low-confidence results

- The same engine can be extended to support PDF ingestion (add pypdf, the successor to PyPDF2), web scraping (add BeautifulSoup), or multiple languages (swap to a multilingual sentence-transformers model)

- Wrapping the engine with FastAPI turns it into a working semantic search microservice in a few dozen lines

What's Next in the Vector Database Series

- Next post: Vector Database Optimisation for Production - Chunking Strategies, Index Tuning, and Scaling

This post is part of the Vector Database Series. Previous post: ChromaDB vs Pinecone vs pgvector: Which Vector Database Should You Use?.

To add a Claude-powered Q&A layer on top of this search engine, see Claude RAG: Retrieval Augmented Generation. For a comparison of vector database options for scaling this project, see What Is a Vector Database? and ChromaDB Tutorial for Beginners.

External Resources

- Sentence-Transformers documentation - the official site for the embedding models used in this project, with model comparison and benchmarks.

- ChromaDB documentation - the official guide for the vector store used for persistence and similarity search.

- all-MiniLM-L6-v2 on Hugging Face - the specific embedding model used in this project; fast, accurate, and free for commercial use.